There is currently a lot of debate around the necessity of regulating AI. Recently, Geoffrey Hinton, apparently referred to as the "Godfather of AI", resigned from Google to be able to freely warn mankind of the potential perils ahead. In March, an open letter signed by luminaries such as Steve Wozniak and Elon Musk called for a pause in the development of advanced AI models. Now, both the FTC and the White House are getting involved in the debate about AI regulations.

So, should we be worried about AI?

When people express concern about AI, they often fear that AI software will take over their jobs. For instance, ChatGPT can write articles faster than humans can, producing credible, if not always accurate, content. Another commonly cited fear is that AI will become self-aware and realize that many of the world's problems could be solved by removing humans from the equation. Being super-intelligent and super-fast, it could crack the nuclear codes or release a deadly virus, among other catastrophic scenarios.

While such scenarios may seem far-fetched, there are many more pragmatic scenarios that would test our trust. Robotic surgery has been around for years. Currently, these surgical systems are guided by highly trained human surgeons. However, since the surgical apparatus is digitally controlled, it's easy to imagine that an AI system could take over these controls. Presumably, an AI system would make fewer mistakes, never get tired, and perform with a precision and accuracy that humans cannot match. But if you were the patient, would you trust such a system?

This is the big question, isn't it? Perhaps you'd trust it to operate on your knee, but not on your brain. What makes you trust a human surgeon today? Is the surgeon having a good day? Have you ever refused a doctor in favor of another one? What if you had no say in the decision? That's what it means to trust an AI.

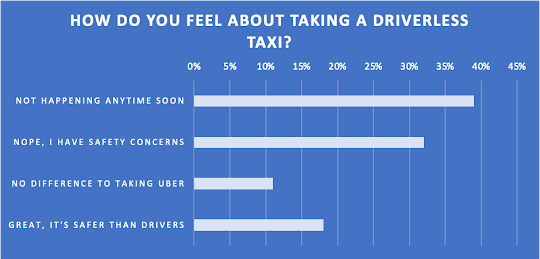

To explore this question further, I conducted a small survey by posting a similar question to my LinkedIn followers. Instead of the medical scenario above, I picked something less personal: using a taxi. Most of us have taken a taxi at some point and have probably considered safety and trust in doing so. But what if the taxi was driven by an AI instead of a human driver? How would you feel about it? Here are the results:

The first thing you may notice is that the largest group of respondents, 39%, doesn't expect this to happen anytime soon. That's quite surprising given the excitement around AI and the fact that Waymo is already operating fully autonomous rides in Phoenix and San Francisco. Autonomous vehicles have been a hot topic for years, and Tesla even offers Full Self-Driving Capability as a rather pricey add-on. Perhaps my wording was misunderstood (the answers are limited to 60 characters), but whether you like it or not, you might be picked up by a driverless taxi quite soon.

The second group of respondents, 32%, were skeptics who were understandably concerned about their safety. Perhaps they've watched the series Upload, where an autonomous vehicle commits a murder. Whether through a malfunction or the intervention of a bad actor, being harmed by an AI in a car seems quite feasible.

The third group was the smallest, with 11% of respondents stating that they don't see much difference between taking a human-driven taxi and an AI-driven one. I can certainly see their point. After all, taking a human-driven taxi is not without risks. In my travels around the world, I've encountered my share of sketchy drivers, cars, and neighborhoods. An AI, while not completely safe, might not be any riskier than some human-driven rides.

The final group is the optimists, with 18%. They believe that an AI will do a better job than humans. This is a very compelling point of view. Most accidents today are caused by human error, and logically, removing humans from these situations would almost certainly prevent those accidents. AI algorithms are already very good and will continue to improve. They are faster and more accurate than humans in their ability to evaluate risky situations and react. If only it weren't for all those other drivers on the road...

Overall, it's clear that we humans are divided about our level of trust in AI. The respondents who answered "not happening any time soon" are effectively not trusting that AI is ready for any use cases where humans could be harmed. Fewer than 30% of respondents (the 18% of optimists plus the 11% who see little difference) are positive or at least neutral in their trust in AI. My sample in this survey wasn't very large (only 62 responses), but hey, I'm sure that Gartner has published $5,000 reports based on a sample like that.

Now, how did I vote? I admit that I am a little concerned about safety. I've seen a lot of software built and sold for production use cases, and I know that such software always has bugs and security vulnerabilities. At the same time, I do believe that our roads would be safer if every car were driven by an AI. It's just the period when my car might be the only one driven by an AI that worries me a lot.

With all the money pouring into AI these days, it will be interesting to watch!
